Understanding AI Music Analysis: The Audio-First Advantage for Indie Artists

73% of independent artists now use AI music tools.

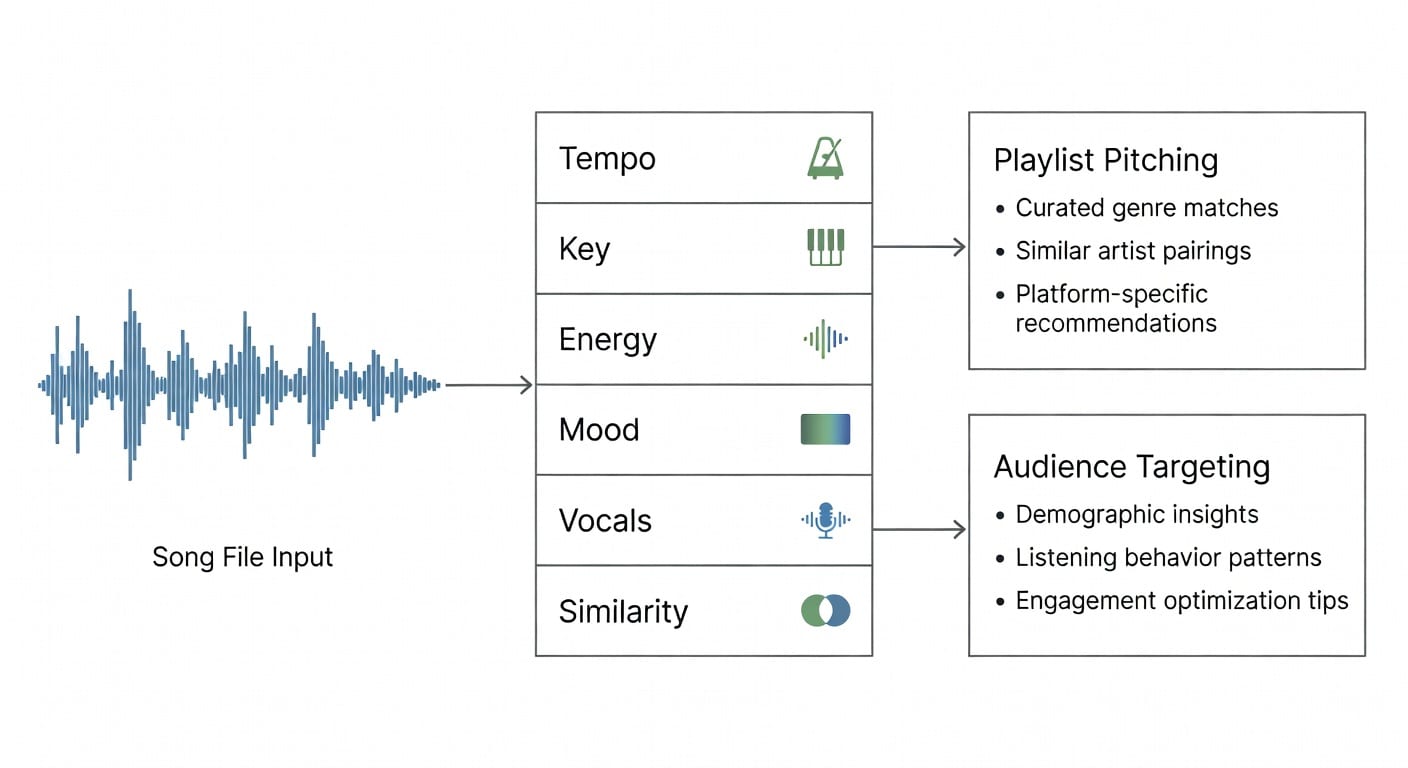

AI music analysis uses audio waveforms to optimize Spotify pitching, playlisting, and audience targeting.

The Evolution of Music Analysis: From Metadata to Audio-First

For years, independent musicians relied heavily on text-based metadata to categorize their tracks. If you wanted to pitch a song, you manually typed in genres, moods, and similar artists. As the streaming landscape has grown more competitive, foundational music industry marketing strategies have had to evolve. Today, effective promotion requires more than knowing your target audience: it also requires understanding how machines "hear" your music.

This shift has given rise to audio-first AI. Instead of reading the tags an artist types into a form, modern AI music analysis listens to the actual acoustic properties of the file. It measures tempo, dynamic range, frequency distribution, and instrumentation to determine where a song truly belongs in the digital ecosystem.
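
To make "listening to the acoustic properties" concrete, here is a minimal sketch of how a few such measurements can be computed from raw samples. It is an illustration only, not how any particular platform works: the synthetic sine tones stand in for a real track, and the feature choices (RMS loudness, crest factor as a rough dynamic-range proxy, zero-crossing rate as a rough brightness proxy) are assumptions made for this example.

```python
import math

def synth_tone(freq_hz, seconds, sample_rate=8000, amplitude=0.5):
    """Generate a sine tone as a list of float samples (stand-in for real audio)."""
    n = int(seconds * sample_rate)
    return [amplitude * math.sin(2 * math.pi * freq_hz * i / sample_rate)
            for i in range(n)]

def rms(samples):
    """Root-mean-square level, a simple loudness measure."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def crest_factor_db(samples):
    """Peak-to-RMS ratio in dB, used here as a rough dynamic-range proxy."""
    peak = max(abs(s) for s in samples)
    return 20 * math.log10(peak / rms(samples))

def zero_crossing_rate(samples):
    """Fraction of adjacent sample pairs that change sign (rises with brightness)."""
    crossings = sum(1 for a, b in zip(samples, samples[1:]) if (a < 0) != (b < 0))
    return crossings / (len(samples) - 1)

def describe(samples):
    """Bundle the three measurements into one feature dictionary."""
    return {
        "rms": rms(samples),
        "crest_db": crest_factor_db(samples),
        "zcr": zero_crossing_rate(samples),
    }

low = describe(synth_tone(110, 1.0))   # bass-register tone
high = describe(synth_tone(880, 1.0))  # brighter tone, same loudness
print(low)
print(high)
```

The two tones have the same loudness but very different zero-crossing rates, which is the kind of difference a text tag cannot capture. A production system would work on decoded audio files and use far richer features, such as tempo estimates and spectral descriptors.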

Core Concepts of Audio-First AI

To fully leverage AI music analysis, indie artists must understand four core concepts that drive modern streaming success:

- Audio-First Analysis: This is the foundation. The AI processes the raw audio waveform rather than relying on user-generated text tags, ensuring an objective evaluation of the track's sonic profile.

- Genre-Authentic Matching: By comparing a track's acoustic signature against millions of other songs, the AI identifies the exact micro-genres it fits into, preventing misclassification that can hurt algorithmic performance.

- Playlist Scoring: This metric evaluates how well a specific track matches the sonic identity of a target playlist, giving artists a data-backed estimate of fit before they pitch.

- Star Moment Detection: AI identifies the most engaging, high-retention segments of a song (the "Star Moments"). This is crucial for creating short-form video content for TikTok or Instagram Reels.

Platforms like PitchPlus use this audio-first AI to provide these exact features, helping artists define their category without relying on outdated metadata tools.
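
The genre-matching concept can be sketched as a nearest-centroid lookup: each micro-genre is summarized by an average feature vector, and a track is assigned to the closest one. Everything in this example is hypothetical, including the three-number feature layout (scaled tempo, energy, acousticness), the centroid values, and the track itself. It does not describe PitchPlus or any other product.

```python
import math

# Hypothetical centroids: (tempo scaled to 0..1, energy, acousticness)
GENRE_CENTROIDS = {
    "lo-fi indie pop": (0.45, 0.40, 0.70),
    "bedroom r&b": (0.40, 0.35, 0.30),
    "synthwave": (0.55, 0.75, 0.05),
}

def euclidean(a, b):
    """Straight-line distance between two feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def rank_genres(track, centroids):
    """Return genre names ordered from closest to farthest centroid."""
    return sorted(centroids, key=lambda genre: euclidean(track, centroids[genre]))

track = (0.41, 0.33, 0.35)  # hypothetical measured features for one song
ranking = rank_genres(track, GENRE_CENTROIDS)
print(ranking)
```

Because the ranking depends only on measured features, the artist's own tag plays no part in the result. Real systems typically use many more dimensions and learned embeddings rather than three hand-picked numbers.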

Practical Application: Pitching and Playlisting

Knowing how to apply AI analysis is what separates successful campaigns from wasted effort. When preparing for a release, artists can use audio analysis to generate accurate, genre-authentic pitches. Instead of guessing which curators to contact, you can use playlist scoring to target curators whose sonic preferences closely align with your track.
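
One simple way to picture playlist scoring is cosine similarity between a track's feature vector and a playlist's average feature vector, followed by a ranking. The sketch below assumes that approach purely for illustration. The playlist names, profiles, and track values are invented, and the score is a similarity measure, not an acceptance probability.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two feature vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical playlist profiles: mean features of tracks already on each playlist
PLAYLISTS = {
    "Late Night Bedroom": (0.40, 0.35, 0.30),
    "Sunny Indie Mornings": (0.60, 0.70, 0.50),
    "Neon Drive": (0.55, 0.80, 0.05),
}

def rank_playlists(track, playlists):
    """Return (playlist name, score) pairs sorted from best to worst match."""
    scored = [(name, cosine_similarity(track, profile))
              for name, profile in playlists.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)

track = (0.41, 0.33, 0.35)  # hypothetical features for the song being pitched
for name, score in rank_playlists(track, PLAYLISTS):
    print(f"{name}: {score:.3f}")
```

Cosine similarity ignores overall magnitude, so two playlists with similar proportions but different intensity can score close together. That is one reason a single number should inform a pitch list rather than decide it.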

Understanding these audio metrics is a critical step toward mastering Spotify's algorithm. Spotify's BaRT recommendation system relies heavily on listener retention. By using Star Moment detection to find the catchiest 15 seconds of your song, you can build short-form clips that drive high-quality traffic from social media directly to your Spotify profile, which can raise your save rate and lower your skip rate.
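
As a rough illustration of finding "the catchiest 15 seconds," the sketch below slides a 15-second window across a per-second energy curve and keeps the window with the highest average. Using energy as a stand-in for engagement is an assumption made for this example, since real Star Moment detection may rely on very different signals. The energy curve is invented.

```python
def best_window(energy_per_second, window_seconds=15):
    """Return (start_second, mean_energy) of the highest-energy window."""
    if len(energy_per_second) < window_seconds:
        raise ValueError("track is shorter than the window")
    # Sum of the first window, then update incrementally as the window slides
    window_sum = sum(energy_per_second[:window_seconds])
    best_start, best_sum = 0, window_sum
    for start in range(1, len(energy_per_second) - window_seconds + 1):
        window_sum += energy_per_second[start + window_seconds - 1]
        window_sum -= energy_per_second[start - 1]
        if window_sum > best_sum:
            best_start, best_sum = start, window_sum
    return best_start, best_sum / window_seconds

# Hypothetical 60-second curve: quiet verse, loud chorus at 0:30-0:45, quiet outro
curve = [0.2] * 30 + [0.9] * 15 + [0.3] * 15
start, mean_energy = best_window(curve)
print(f"Clip from 0:{start:02d} to 0:{start + 15:02d}, mean energy {mean_energy:.2f}")
```

The returned start time gives a timestamp to cut a short-form clip from. In practice the candidate segment should still be checked by ear, because the loudest passage is not always the most memorable one.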

Advanced Techniques for Indie Artists

Advanced AI music analysis isn't just for major labels with massive budgets. Pay-as-you-go pricing aimed at indie artists now gives independent musicians access to enterprise-level audio intelligence without locking them into expensive monthly subscriptions.

One advanced technique is using genre-authentic optimization to A/B test different mixes of a track before the final master. By running different versions through an audio AI tool, artists can see which mix scores higher for their target editorial playlists. Additionally, combining these insights with streaming analytics lets artists correlate their audio scores with actual streaming revenue and listener drop-off rates.
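
Correlating audio scores with listener behavior can be as simple as a Pearson correlation across past releases. The sketch below assumes five earlier releases with a playlist score and a skip rate each. All of the numbers are made up for illustration, and five data points are far too few to draw real conclusions.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

# Hypothetical history: one entry per past release
playlist_scores = [0.62, 0.71, 0.80, 0.88, 0.93]
skip_rates = [0.48, 0.41, 0.37, 0.30, 0.26]

r = pearson(playlist_scores, skip_rates)
print(f"correlation between playlist score and skip rate: {r:.2f}")
```

A strongly negative value would suggest that higher-scoring releases were skipped less often in this sample. It would not show that the score caused the difference, so treat it as a prompt for further testing rather than a verdict.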

Expert Tips: Audio AI vs. Metadata Tools

Metadata is subjective; an artist might tag their song as "Lo-Fi Indie Pop," but an audio-first AI might reveal its acoustic signature aligns much closer to "Bedroom R&B."

When pitching to curators or running ad campaigns, authenticity is everything. Experts recommend replacing generic metadata tagging with audio-first insights. Use the micro-genres and mood descriptors generated by the AI to craft your pitch emails. Build your social media promotional assets around the AI-identified Star Moments as well, since these are the segments the analysis flags as most likely to capture and hold listener attention.

Frequently Asked Questions

What is the difference between audio-first AI and metadata analysis?

Metadata analysis relies on text tags manually entered by the user, which can be subjective and inaccurate. Audio-first AI analyzes the actual soundwaves of the music, measuring acoustic traits like tempo and energy for objective, highly accurate categorization.

How does Star Moment detection help indie artists?

Star Moment detection identifies the most engaging and catchy segments of a song. Artists use these specific timestamps to create highly effective short-form content for TikTok, Instagram Reels, and YouTube Shorts, driving better conversion to streaming platforms.

Is AI music analysis affordable for independent musicians?

Yes. Modern platforms like PitchPlus offer a pay-as-you-go model specifically designed for indie artists and labels. This allows musicians to access advanced playlist scoring and genre-authentic pitching tools without committing to expensive, long-term subscriptions.
